One of the major differences in intelligence between humans and AI is emotional blocking. How many times have you failed to get through to someone, despite explaining the same point again and again? However much experience you have, the other person may not even acknowledge your point. This happens to me often, and I am amazed at how inefficient humans can be. As a result, I am less worried about AI and more concerned about how we humans interact with one another. Machines may learn and listen to us, but humans are here to stay and do not seem likely to change or start listening anytime soon.

December 17, 2025

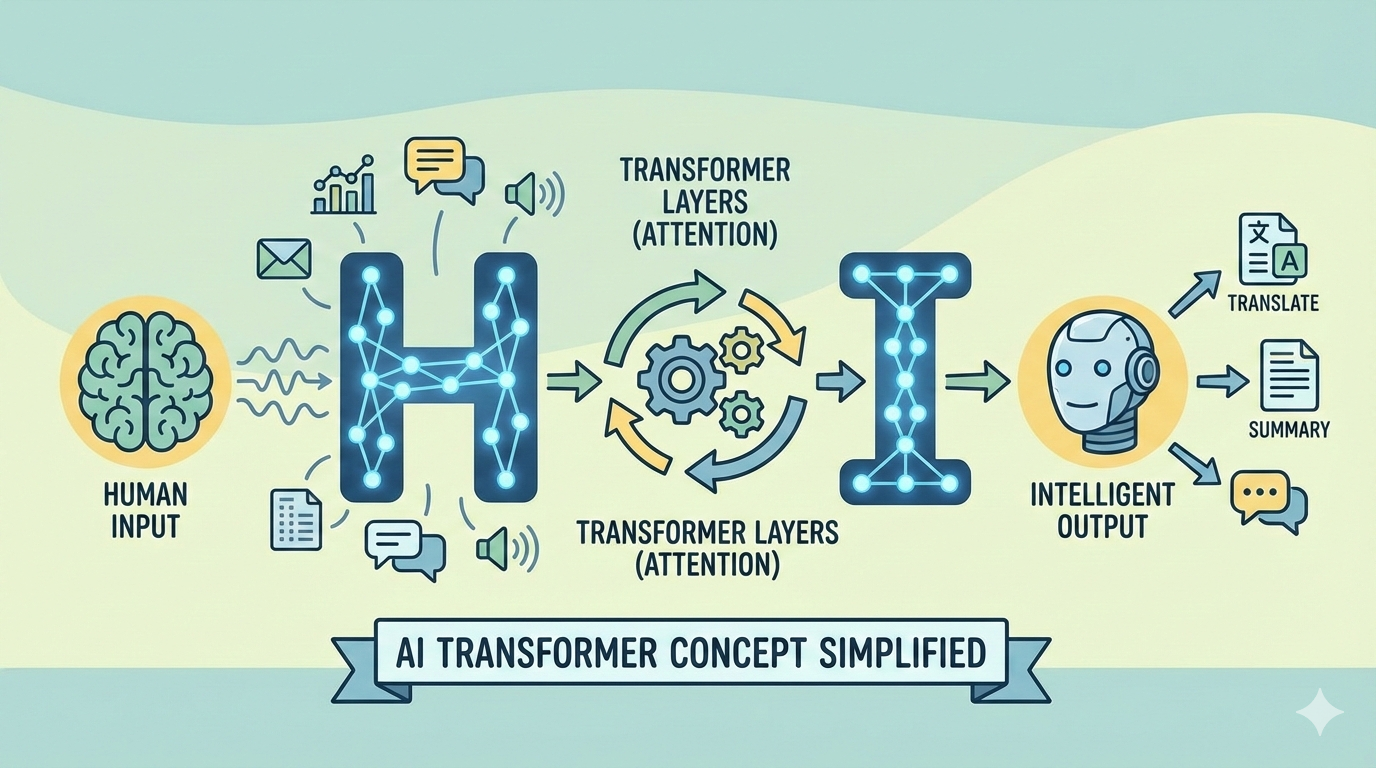

16.1 AI Transformer Concept Simplified Around HI

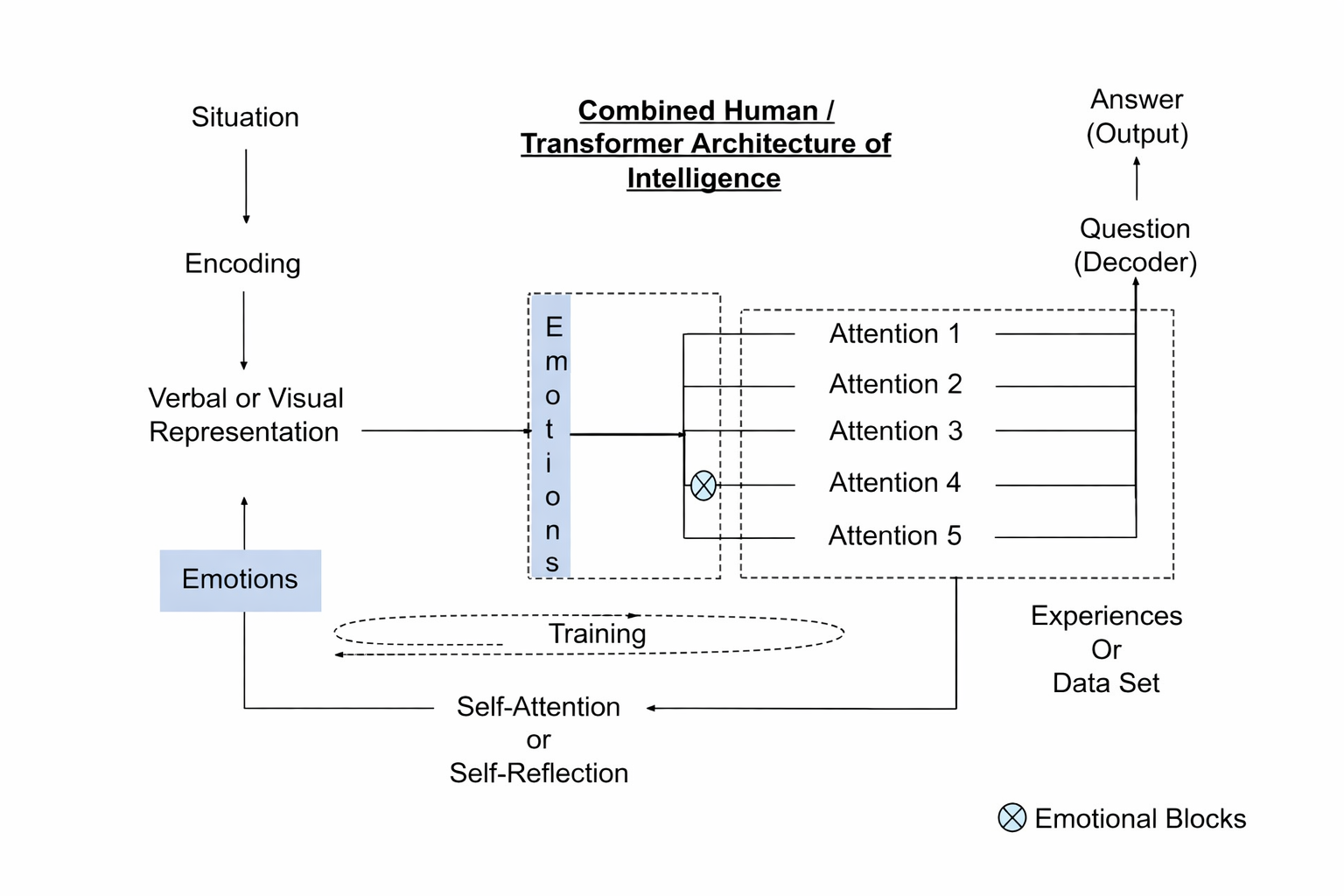

I decided to explain human intelligence (HI) using AI terminology, and AI using human terms, drawing on the original Google paper on the Transformer architecture. That paper, “Attention Is All You Need,” published in 2017, is the seminal work upon which much of today’s AI tooling is built. By combining insights from both domains, I aim to improve our own intelligence, something humans seem to need more urgently, particularly when we occupy influential positions on the world stage and make decisions that affect billions of people.

Let’s define some basic terms before I build the model.

Scenario: A scenario refers to a specific situation that you want to consider in order to obtain a targeted answer or gain understanding. It is a broad term and can include a problem you want to solve, a situation you are currently in or have encountered, an incident or failure you are facing, or any circumstance you wish to analyze.

Summary or Encoder: The scenario or issue needs to be described concisely, usually in words. For machines, this corresponds to the initial query that is entered, which is split into individual tokens and then encoded into numerical representations for further processing.

Attention or Focus: This refers to the specific angle from which you approach a scenario. While you may initially adopt one perspective, many others are possible. Each perspective represents a distinct form of attention, whether it is a focus, a preference, or an opinion. Because multiple angles exist, it is beneficial to generate as many perspectives as possible before seeking a specific answer or outcome. As social beings, humans are exposed to numerous viewpoints, pieces of advice, and opinions from others. Ideally, these should be received openly rather than blocked. The more we engage in discussion, the more perspectives we uncover. For machines, attention operates differently. The model compares each token in the input with every other token, however far apart they sit in the sequence, and assigns each a weight based on learned importance. This happens in parallel for all tokens at once, which is what allows Transformers to make use of large-scale computational resources. Word order is not discarded: the original paper adds positional encodings to the token representations so that position can still inform the weights.
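To make the machine side of this concrete, here is a minimal sketch of the scaled dot-product attention described in the original paper, written in plain Python for a single query. The toy vectors and the names `softmax` and `attention` are my own assumptions for illustration; real models use learned, much larger vectors and process many queries at once.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    d_k = len(query)
    # Similarity of the query to every key, scaled by sqrt(d_k).
    scores = [
        sum(q * k for q, k in zip(query, key)) / math.sqrt(d_k)
        for key in keys
    ]
    weights = softmax(scores)
    # The output is the weighted average of the value vectors.
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output

# Toy 2-dimensional vectors, invented for illustration only.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
query = [1.0, 0.0]

weights, output = attention(query, keys, values)
print(weights)
print(output)
```

The weights always sum to 1, so giving more attention to one token necessarily means giving less to the others. In this toy case the first and third keys match the query equally well and share the largest weight.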

Question or Decoder: This represents the outcome you are seeking or what you consider important—such as an answer, a specific action to take, a result, or an analysis. It defines the direction in which the reasoning process is guided. For a machine, this corresponds to the decoder, which generates the final output based on the encoded input and attention mechanisms. An example of this is translating text from English to French.

Emotions or Blocks: These refer to the emotional responses associated with each perspective or angle we might consider. For example, when we are fearful, we may avoid certain perspectives because we do not want to confront particular situations or outcomes. Emotions, shaped by past experiences, can therefore block specific forms of attention or viewpoints. For machines, such emotional blocks do not exist. Historically, the limitations were technical rather than psychological—such as sequential processing constraints, limited computational power, and insufficient parallelism. These constraints functioned as the “blocks” in earlier machine architectures, prior to the development of Transformer models.

Experiences or Available Data: In the human context, this refers to lived experiences accumulated over time; in the machine context, it refers to the data the model was trained on together with the input it is given. A person’s total experience and emotional makeup determine the range and number of perspectives they are able to generate for any given situation. Individuals who have tried many things and experienced both failure and success tend to develop a richer set of perspectives. Similarly, travel and exposure to different ways of living expand one’s experiential dataset, enabling more nuanced understanding. In contrast, some individuals are mentally rigid and unable to entertain alternative viewpoints, even briefly. Such rigidity is often described using terms like inflexible, judgmental, obsessive, or closed-minded, and is sometimes discussed under the broader concept of emotional intelligence (often abbreviated EQ). The more willing and open a person is to evaluating different points of view, the larger their effective “context” becomes. A larger context allows for deeper and more comprehensive understanding of a situation. Without this openness, decision-making is constrained to narrow and limited choices.

Self-Attention or Self-Reflection: At this stage, the original situation—as initially described—may contain personal biases or emotional blocks. Self-reflection is the process through which experience reshapes how that situation is represented and understood. When you are willing to revise how you framed the original scenario and how you summarized it, you gain significant cognitive leverage. Through iterative loops of generating multiple perspectives and reflecting on them, the original representation is refined. If the situation is expressed in words, those words are adjusted to emphasize what is truly important while de-emphasizing less relevant details. This step goes beyond simple parallel analysis. It involves actively recasting the problem itself rather than merely analyzing it. In Transformer architecture, this corresponds to self-attention—where the model refines its internal representation of the input. In human cognition, this ability to redefine the original problem is a key marker of deeper intelligence and understanding. Emotions also play a significant role here, as they influence both what is revised and what is retained.
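As a rough illustration of the machine side, the sketch below applies self-attention to a short sequence of toy vectors, so that each token's representation is rewritten as a weighted blend of every token in the same input. It is a simplification under stated assumptions: the original paper first projects each token into separate query, key, and value vectors using learned matrices, whereas here the token vectors are reused directly for all three roles, and the numbers are invented.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(tokens):
    """Return a refined vector for every token in the sequence.

    Simplification: each token vector serves as its own query, key,
    and value (no learned projection matrices).
    """
    d = len(tokens[0])
    refined = []
    for query in tokens:
        # Compare this token with every token in the same sequence.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
            for key in tokens
        ]
        weights = softmax(scores)
        # New representation: a weighted blend of all token vectors.
        refined.append(
            [sum(w * tok[i] for w, tok in zip(weights, tokens)) for i in range(d)]
        )
    return refined

# Three toy token vectors, invented for illustration only.
tokens = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
refined = self_attention(tokens)
for before, after in zip(tokens, refined):
    print(before, "->", after)
```

Each refined vector is pulled toward the tokens it resembles most, which is the mechanical counterpart of rewording a problem statement in light of everything else in it.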

Training or Experiences: This is the process by which humans draw upon their experiences and emotional responses to determine what is important and what is not, guided by their goals, values, and desires. Through reflection and repetition, certain patterns become reinforced while others are deprioritized. In machine learning, this corresponds to training, in which the model’s weights are adjusted over many examples so that the importance it assigns to tokens reflects patterns observed across many contexts. Over time, these learned weights shape how the model interprets input and produces output.

Transformer Model: Now let us bring all of these elements together into a single model or conceptual picture that illustrates their relationships. This representation mirrors the original Google Transformer architecture—an architecture that many find difficult to understand—while making it more intuitive and accessible.

Example:

Situation: Let me now illustrate how this model works by using a real situation that recently occurred. I own a boat that is typically left in the water, secured to a wooden pier in my backyard. On this occasion, I did not leave sufficient slack in the cable that was locked to the boat. The cable is a strong steel wire designed to secure valuables, and my intention was to prevent the boat from moving due to wind, so I tightened it with no slack. For several months, I did not move the boat. Over time, the water level of the lake dropped by nearly four feet, causing the cable to become extremely tight and begin cutting into the wooden pier. At that point, I realized my mistake: I should have allowed slack in the cable, and I had failed to anticipate such a significant change in water level. As a result, the boat is now suspended by the cable. Even after opening the lock, there is no slack available to remove the cable. The situation can therefore be defined as follows.

Encoded Verbal Representation:

“Boat is stuck because the anchoring cable is too tight to unlock and lower the boat.”

Based on this definition of the situation, both a human and a computer use the words (or associated visuals) to generate multiple possible focuses or attentions. These attentions represent different ways of interpreting and approaching the problem. Below are some examples.

Attention:

Attention 1: Boat stuck

Attention 2: Cable is tight

Attention 3: Lower the boat

Attention 4: Unlock

Attention 5: Boat tension

Attention 6: Release mechanism

At this stage, it is important to remain open to all possible attentions and avoid eliminating any prematurely. Each represents a legitimate angle from which the situation can be understood. A machine processes these by evaluating tokens and assigning relative weights based on learned importance. Humans, however, often filter attentions emotionally or cognitively before fully evaluating them.

In my case, I became overly focused on the idea of the boat itself and on lowering it (Attentions 1 and 3). I implicitly blocked Attention 2—the tight cable—because I assumed the cable was too thick or impractical to cut or modify. When my wife suggested simply cutting the cable, I dismissed the idea. In retrospect, this may have been influenced by pride or resistance to adopting someone else’s solution.
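The difference between weighing every attention and blocking one can be sketched in code. The scores below are invented for illustration and are not measurements; the mask stands in for the emotional block on Attention 2. In the original Transformer, masking serves a different purpose (keeping the decoder from looking at later positions), so this is an analogy rather than a description of how any model handles emotion.

```python
import math

def masked_softmax(scores, blocked):
    """Softmax in which blocked entries are scored -inf, so their weight is 0."""
    masked = [-math.inf if b else s for s, b in zip(scores, blocked)]
    peak = max(masked)  # assumes at least one entry is not blocked
    exps = [math.exp(s - peak) for s in masked]
    total = sum(exps)
    return [e / total for e in exps]

attentions = [
    "Boat stuck", "Cable is tight", "Lower the boat",
    "Unlock", "Boat tension", "Release mechanism",
]
# Hypothetical relevance scores, chosen only to illustrate the idea.
scores = [2.0, 2.5, 2.0, 1.0, 1.0, 1.0]

# Open evaluation: nothing is blocked.
open_weights = masked_softmax(scores, [False] * len(scores))

# Emotional block: Attention 2 ("Cable is tight") is masked out.
blocked = [False, True, False, False, False, False]
blocked_weights = masked_softmax(scores, blocked)

for name, w_open, w_blocked in zip(attentions, open_weights, blocked_weights):
    print(f"{name:18s} open={w_open:.2f} blocked={w_blocked:.2f}")
```

Under these assumed scores, "Cable is tight" carries the largest weight when nothing is blocked. Once it is masked, its weight is exactly zero and the remaining weight is redistributed to the other attentions, which mirrors the fixation on Attentions 1 and 3.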

As a result, I focused on Attentions 1 and 3 and decided to acquire an additional winch to lift the boat high enough to introduce slack into the cable. I waited nearly three weeks under the assumption that the boat was stable and not going anywhere. However, the boat weighs approximately 3,000 pounds, and that sustained load began damaging the attachment points where the cable was secured, something I had not fully considered at the time.

This realization redefined the situation through self-reflection and experiential learning. The problem was no longer just about lowering the boat, but about preventing structural damage and reassessing which attentions truly mattered.

Self-Trained Representation:

“A heavy boat is suspended by a tight cable, placing excessive load on the pier and attachment points, creating an immediate risk of structural damage that must be resolved urgently.”

Only after recognizing the danger posed by the boat’s weight—and the risk of damage to both the pier and the boat—did I reassess the urgency of the situation. I then decided to cut the wooden section of the pier and went to the store to purchase a saw. It was at this point that I discovered there are distinct tools designed for different materials: saws intended for cutting wood and saws specifically designed for cutting metal, each with very different blade designs.

Decoded Answer:

I purchased one saw designed for cutting wood and another designed for cutting metal. My wife advised that the cable should be cut first. I was able to cut the cable in under a minute, effectively resolving the problem, although not before the prolonged tension had already damaged the upper portion of the boat.

In hindsight, this was not a failure of intelligence but a failure of emotional openness. My emotional resistance to cutting the cable caused me to fixate on lifting the boat, which unnecessarily worsened the situation. Only after I shifted my focus—initially toward cutting the wood—did the correct solution become visible.

Had I systematically evaluated each attention and generated sub-attentions, I would have quickly recognized that cutting the cable was the simplest and most effective solution. Instead, fixation on a single approach delayed resolution and increased risk.